Beyond the PR: Shifting Review into the Coding Session

February 26, 2026 · Peter Rallojay & Noah Mitchem

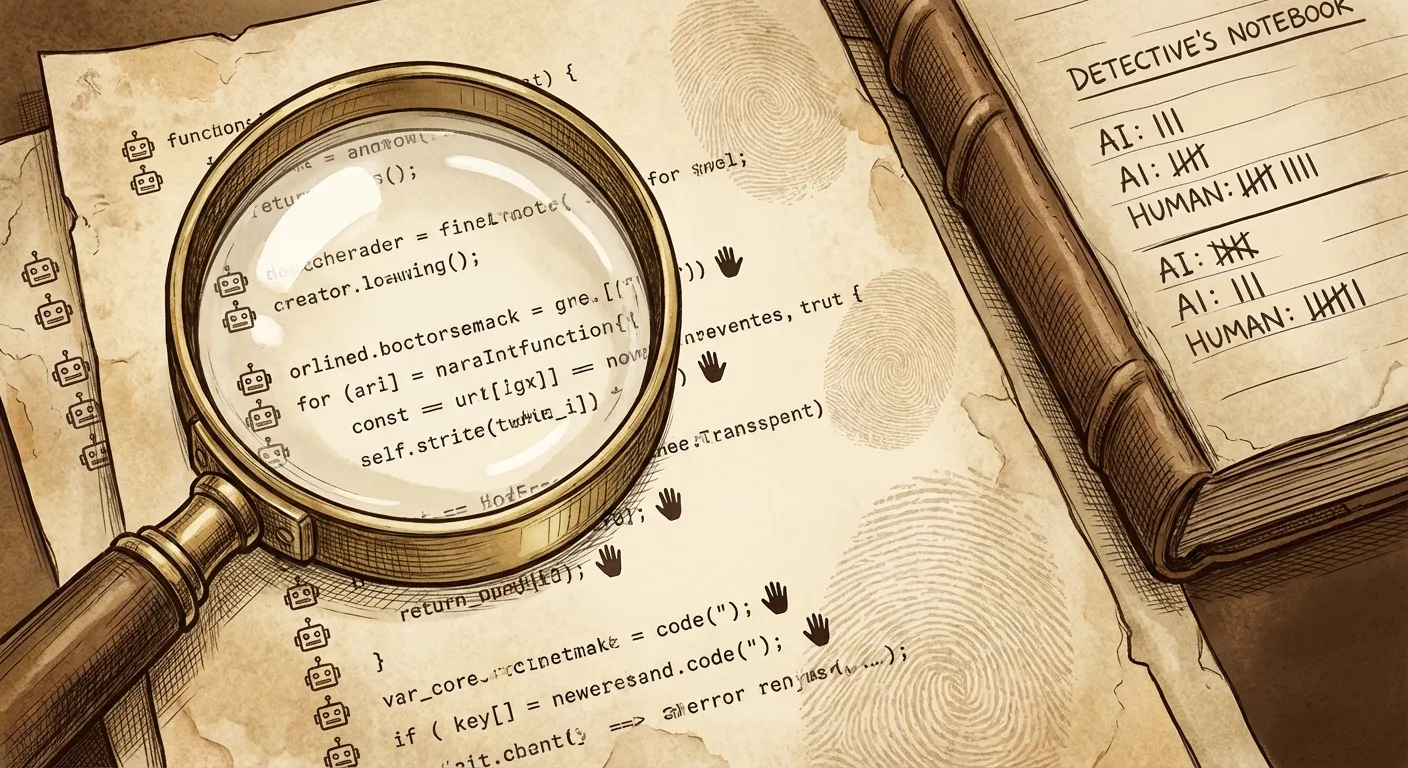

For months my workflow followed a predictable pattern. I would prompt an agent to produce a block of code and, while manually looking through the changes, run a parallel review by switching tabs in Claude or OpenCode to "Reviewer Mode" for a critique. I assume lots of devs do this today and, like me, find it frustrating in some ways. That frustration led to Mesa Code Review 2.0.

Noah and I started with a simple assertion: Code review is not a gate you pass through after writing code. It is a constraint that shapes the code as it’s written.

The "Reviewer Tab" Dance

The goal of tabbing over to a reviewer was to work effectively with these agentic tools, but the sheer volume of code they produce is overwhelming. Even while reviewing in parallel, the nondeterministic nature of these models created a bizarre secondary loop.

The Reviewer agent often flagged issues that were technically dense. These moments forced me to stop and verify patterns that I simply did not have at the top of my mind. In other cases the feedback was confusingly specific to a context the agent could not quite grasp. There were also times when it would flag a pattern we use canonically throughout our codebase. It would claim the code was "wrong" based on a generic internet standard, even though it was our established way of working.

I did not always have the immediate context to verify these claims on the fly. This led to an inefficient loop where I would switch back to the original "Coding" agent and ask: "The reviewer says this is a violation. Is that actually true for us?"

Even though the review happened "in context," we were still spending massive amounts of cognitive energy parsing noise. If we are going to survive the era of agent-generated code, we need to adjust our workflows. The review should happen while the code is being formed, rather than as a separate conversation after the fact.

The Power of Determinism

Mesa Code Review 2.0 is about shifting the timing and making "in context" reviews grounded in truth. We aren't saying asynchronous review is bad or wrong; we’re saying that waiting until the very end, after a PR is created, is the most expensive moment to find an error. Mesa Code Review 1.0 does an excellent job of reducing this cost, but we know we can do better.

The goal Noah and I set was to move from "Review as a Conversation" to "Review as a Constraint." By bounding the review to deterministic, codebase-specific rules that are statically defined, we fundamentally change the math of the workday:

More Deterministic = Less Noise = Less Cognitive Load = Higher Confidence.

We no longer have to parse vague concerns from a general LLM. I know that if Mesa speaks, it's because a specific, version-controlled rule was violated. If the system stays silent, the code is clean with respect to those rules.

The Architecture of Silence

To achieve this, Noah and I moved the engine into a local Synchronous Stop Hook. In our 2.0 architecture, when an agent like Claude Code finishes an edit, the review triggers immediately.
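To make the hook idea concrete, here is a minimal sketch of what a Stop hook entry point could look like. It assumes the contract Claude Code documents for hooks: a JSON event arrives on stdin, exit code 0 means "carry on," and exit code 2 is treated as a blocking error whose stderr text is handed back to the agent. The `review` callable and the function names are hypothetical stand-ins, not Mesa's actual engine.

```python
import json
import sys
from typing import Callable

# Hypothetical: stands in for the real review engine.
# Takes the project directory and returns one message per violated rule.
ReviewFn = Callable[[str], list[str]]


def run_stop_hook(raw_event: str, review: ReviewFn) -> tuple[int, str]:
    """Turn one Stop event into (exit_code, stderr_text)."""
    event = json.loads(raw_event) if raw_event.strip() else {}
    cwd = event.get("cwd", ".")  # assumed field: the session's working directory
    violations = review(cwd)
    if not violations:
        return 0, ""  # nothing to report, so the hook stays silent
    # Exit code 2 blocks the agent from stopping; the message goes to stderr.
    return 2, "\n".join(violations)


def main(review: ReviewFn) -> int:
    code, message = run_stop_hook(sys.stdin.read(), review)
    if message:
        print(message, file=sys.stderr)
    return code

# A real hook script would finish with something like:
#   sys.exit(main(my_review_function))
```

Keeping the decision in a pure function (`run_stop_hook`) and the I/O in `main` makes the blocking behavior easy to test without spawning an agent session.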

Speed is the primary constraint. We optimized the engine to run in a tight, deterministic loop that minimizes "thinking" time:

- Linter-Style Context Gathering: Mesa handles context gathering like a linter. We use glob patterns and dependency analysis to pinpoint relevant rules before the agent is even invoked. This deterministic approach ensures every tool call is high value. It prevents the loop where an agent reads the same file repeatedly or wanders into irrelevant directories.

- Parallel Execution: Files are split into worker groups, and concurrent sub-agents validate different parts of the change set simultaneously, so review time does not grow linearly with the number of files.

- Silence is Success: Mesa is designed to speak only when a rule is definitively broken. If the code is clean, the system stays silent.

- The Exit 2 Penalty: If a rule is violated, Mesa blocks the session and forces an immediate fix while the agent's context window, and your own mental model, is still hot. The name refers to exit code 2, which Claude Code hooks treat as a blocking error.
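The first two bullets can be sketched in a few lines. This is an illustration under stated assumptions, not Mesa's implementation: the `Rule` shape, the function names, and the plain-function `check` (standing in for a sub-agent) are all hypothetical, and dependency analysis is left out. What it does show is the shape of the idea: rules are matched to files by glob before any checking starts, and files are checked concurrently while the output order stays fixed.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import Callable


@dataclass(frozen=True)
class Rule:
    """Hypothetical rule shape: an id, the globs it applies to, and a check."""
    rule_id: str
    globs: tuple[str, ...]
    check: Callable[[str, str], bool]  # (path, text) -> True when violated


def rules_for(path: str, rules: list[Rule]) -> list[Rule]:
    # fnmatchcase is case-sensitive on every platform; note that "*" also
    # matches "/" here, so "*.py" covers nested paths too.
    return [r for r in rules if any(fnmatchcase(path, g) for g in r.globs)]


def check_file(path: str, text: str, rules: list[Rule]) -> list[str]:
    # Only rules whose globs match this path are ever evaluated.
    return [f"{path}: {r.rule_id}" for r in rules_for(path, rules) if r.check(path, text)]


def review(changed: dict[str, str], rules: list[Rule], workers: int = 4) -> list[str]:
    """Check changed files concurrently; an empty list means silence."""
    items = sorted(changed.items())  # fixed order in, fixed order out
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() yields results in input order regardless of which finishes first.
        results = pool.map(lambda item: check_file(item[0], item[1], rules), items)
        return [v for file_violations in results for v in file_violations]
```

Feeding the returned list into a hook that exits with code 2 when it is non-empty, and 0 otherwise, would give the "silence is success" behavior the list describes.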

By moving the gate from the end of the process to the active coding session, we ensure that the code is already compliant by the time you are ready to commit. The thinking is done upfront, bounded by the rules you have already defined.

An Open Question: The Feedback Loop

As we move toward this model, we are curious how it affects the way developers internalize standards. For us, this approach ingrains best practices. Seeing the review run repeatedly as code is created exposes the developer to the rules over and over. This repetition makes it easier to recognize nuances and forces a better understanding of the system as a whole.

We want to see if real-time enforcement helps teams learn patterns faster. We don’t know the answer yet. But by moving these objective constraints into private feedback, we hope to liberate the Pull Request. It should be a surface for architectural collaboration and mentorship, not a graveyard of auto-generated nitpicks.

If you want to try Mesa Code Review 2.0 yourself:

brew install mesa-dot-dev/homebrew-tap/code-review

docs.