Code Review Was Built for a Slower World

February 20, 2026 · Noah Mitchem & Peter Rallojay

I mass-closed 30 AI code review comments last Tuesday. Not because they were wrong; most were valid catches. Because the agent that wrote the code was already gone, and I didn't feel like playing translator between two AIs that had never met each other.

That's the moment I realized something was off about how we review code now.

Most days I barely type code anymore. I open Claude Code, describe what I want ("add rate limiting to the upload endpoint, sliding window, Redis counters"), and the agent builds it across four files in about 90 seconds. I scan the diff, adjust a return type, commit, move on. Fifteen, maybe twenty times a day. Describe intent, steer, review diffs. That's the job now.

Then I push a branch and open a PR.

And I wait.

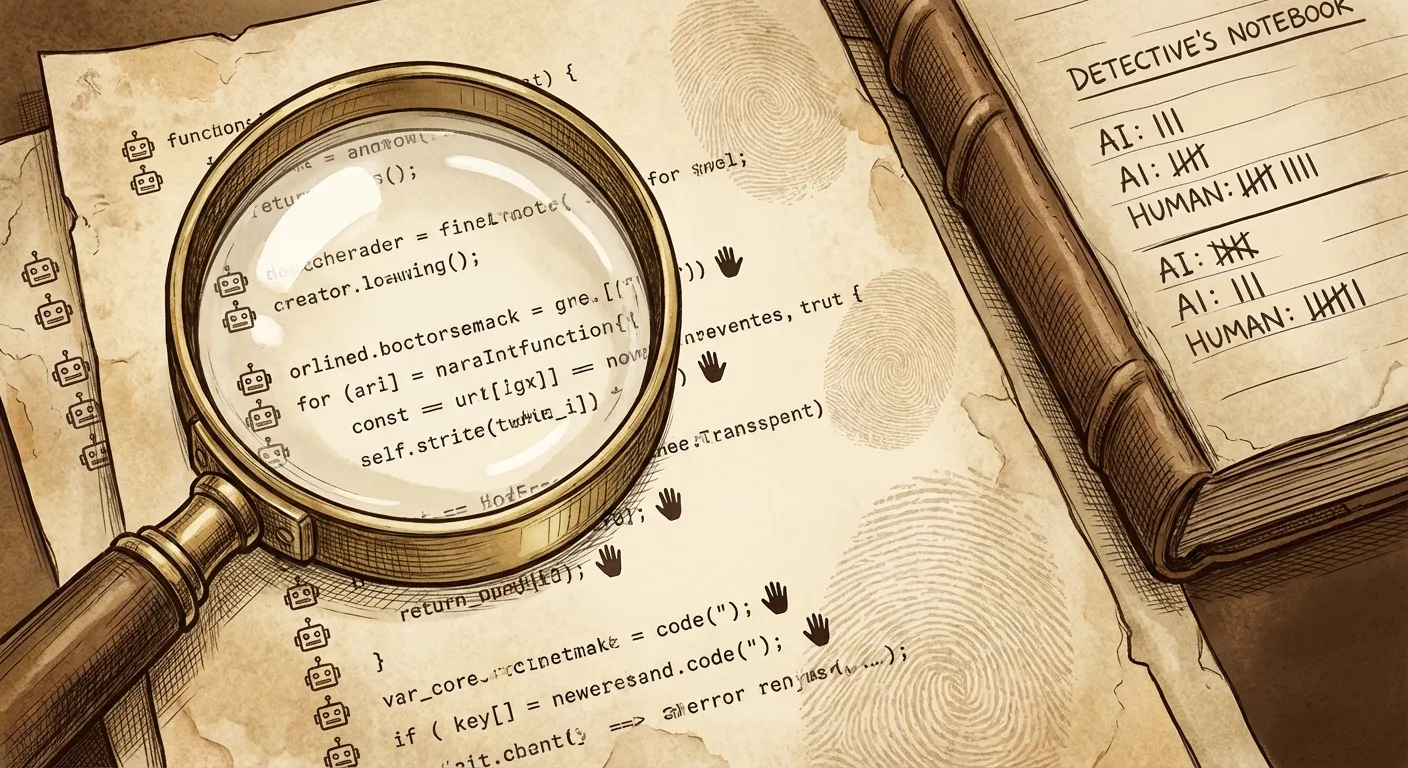

Five minutes later, Mesa's AI code reviewer wakes up. Or a competitor's: CodeRabbit, Greptile, Qodo, take your pick. The market is mature and these tools are genuinely good. They parse the diff, reason about the code, leave comments. A missing error boundary. A naming inconsistency. A potential security issue in that new handler.

Valid feedback. Real catches. But here's the thing.

The agent that wrote the code? Its context window, the one that understood the task, the constraints, the reason it chose that approach, is closed. Gone. Garbage collected. The session that could have fixed these issues in seconds no longer exists.

So now I'm the middleman. I'm reading one AI's critique of another AI's work, trying to decide what matters, trying to reconstruct intent that lived in a context window I never saw. And if I spin up a new agent session to address the comments, that agent starts from scratch too. It doesn't know why the first agent made those choices. It just sees a diff and some review comments and does its best.

A relay race where every runner starts blind.

None of this is the reviewer's fault. These tools are doing exactly what they were designed to do. The problem is what they were designed for.

PR-based review assumed a world where:

- A human writes code over hours or days
- They push when they consider it "done"
- A reviewer reads the changes and starts a conversation
- The author responds, iterates, merges

Every one of those assumptions depends on the author persisting. Remembering decisions. Being available for the back-and-forth. Carrying context across the review cycle.

When the author is a 90-second agent session, every assumption breaks. The review still catches real issues, but it catches them at the most expensive possible moment: after the context needed to fix them has been destroyed.

What if the review happened while the code was being written, and you didn't even notice?

Picture your normal workflow. Same tools, same habits. But your team has defined its constraints (architectural rules, security requirements, coding standards) as plain files versioned in the repo. When the agent starts a session, those rules load into its context automatically. It knows what's allowed before writing a single line. You didn't configure anything for this session. You didn't run a command. It just happens.
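To make "plain files that load into context" concrete, here is a minimal sketch. Everything specific in it is an assumption for illustration: the idea of a rules directory full of `*.md` files, the function names `load_rules` and `build_preamble`, and the wording of the preamble. It is not how any particular tool stores or injects its rules, only the general shape of the idea.

```python
import tempfile
from pathlib import Path


def load_rules(rules_dir: Path) -> dict[str, str]:
    """Read every *.md file in rules_dir, keyed by file name without extension.

    Paths are sorted so the resulting order is stable from session to session.
    """
    return {
        path.stem: path.read_text(encoding="utf-8").strip()
        for path in sorted(rules_dir.glob("*.md"))
    }


def build_preamble(rules: dict[str, str]) -> str:
    """Join the rules into one text block to place at the start of the agent's context."""
    sections = [f"## {name}\n{body}" for name, body in rules.items()]
    return "Team constraints (must not be violated):\n\n" + "\n\n".join(sections)


if __name__ == "__main__":
    # Hypothetical rule files, written to a temp dir so the sketch runs anywhere.
    with tempfile.TemporaryDirectory() as tmp:
        rules_dir = Path(tmp)
        (rules_dir / "security.md").write_text(
            "No raw SQL in handlers. Use the query builder.\n", encoding="utf-8"
        )
        (rules_dir / "architecture.md").write_text(
            "Handlers call services. Services own data access.\n", encoding="utf-8"
        )
        print(build_preamble(load_rules(rules_dir)))
```

The point of keeping rules as ordinary files is that they get versioned, reviewed, and diffed like everything else in the repo, and nobody has to remember to load them.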

You give it the same task. "Rate limiting, sliding window, Redis." It starts building. Halfway through, it writes a raw SQL query in a handler, catches itself, rewrites the line using the query builder, keeps going. You never saw the violation. You review a clean diff and move on.

That's the whole experience. There's nothing to watch, no dashboard to check, no comment thread to triage. The review ran in the same session, same filesystem, same git state, and the agent fixed it with full context of what it was building and why. By the time you look at the diff, the problem is already gone.

Because these are constraints, not suggestions, the feedback is binary. The agent doesn't weigh whether some comment is worth acting on. It either violated a rule or it didn't. No judgment call, no noise. Just clean code out the other side.
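Here is a rough sketch of what "binary" means in practice, using the raw SQL example from earlier. The `Rule` and `Violation` types, the `no-raw-sql-in-handlers` rule, the `handlers/` path convention, and the regex itself are all hypothetical; a real checker would likely use something sturdier than a pattern match. What matters is the shape of the result: a list of violations that is either empty or not.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    name: str
    path_pattern: str  # regex: which file paths the rule applies to
    forbidden: str     # regex: what must not appear in an added line


@dataclass(frozen=True)
class Violation:
    rule: str
    path: str
    line: str


# Hypothetical rule: uppercase SQL statements are not allowed under handlers/.
NO_RAW_SQL = Rule(
    name="no-raw-sql-in-handlers",
    path_pattern=r"(^|/)handlers/",
    forbidden=r"\b(SELECT|INSERT|UPDATE|DELETE)\b.+\b(FROM|INTO|SET)\b",
)


def check(rules: list[Rule], changes: dict[str, list[str]]) -> list[Violation]:
    """Check added lines (file path -> lines) against every applicable rule.

    An empty result means the change passes. There is no severity or score.
    """
    violations = []
    for path, lines in changes.items():
        for rule in rules:
            if not re.search(rule.path_pattern, path):
                continue  # rule does not apply to this file
            for line in lines:
                if re.search(rule.forbidden, line):
                    violations.append(Violation(rule.name, path, line.strip()))
    return violations


if __name__ == "__main__":
    raw = '    rows = db.execute("SELECT * FROM uploads WHERE user_id = %s", (uid,))'
    for violation in check([NO_RAW_SQL], {"app/handlers/upload.py": [raw]}):
        print(f"{violation.rule}: {violation.path}: {violation.line}")
```

An agent running a check like this mid-session gets back either nothing or a specific line to rewrite, which is why there is nothing left for it to weigh.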

Plenty of developers are already moving in this direction, wiring review agents into Claude Code, Cursor, and OpenCode as part of how they work. The feedback happens where it matters, in the session, while they're building.

That doesn't replace what happens on the PR. We build a PR reviewer too, and teams rely on it every day. PR-level review serves a different purpose: it's where the team sees your work. Cross-cutting architectural concerns, intent verified against requirements, a readable summary for reviewers who weren't in the session. That's collaboration infrastructure, and it matters.

But for the individual developer, the person sitting in Claude Code right now, shipping their fifth feature of the day, the review that changes their output most is the one running inside the session. The one that fires right away. The one they never have to think about.

Most teams today have one layer of code review: the PR gate. It's valuable, but it was designed for a world where the author sticks around to hear the feedback. The teams shipping fastest have added a layer before it: constraints that run silently during development, so seamlessly that the developer forgets they're there. By the time the code reaches the PR, the easy violations are already gone, and the PR reviewer can focus on the things that actually need team-level attention.

Your agents are already writing the majority of your code. The question is whether your review process was designed for that, or for the world before it.

If you want to try our tool yourself, our docs walk you through setting it up.